AI risks in virtualized environments are becoming an enterprise concern as AI tools move deeper into daily operations. AI systems now operate inside marketing, HR, finance, legal, and engineering workflows, while development teams rely on coding assistants with access to repositories, CI/CD pipelines, and cloud environments.

As these systems move from text generation to action, the risk shifts. The concern is no longer just data leakage or incorrect output, but runtime access, privileged execution, and proximity to critical virtualized infrastructure.

The Expanding Attack Surface

The attack surface expands as AI systems gain the ability to autonomously invoke tools, integrate with enterprise infrastructure, execute generated code, and access privileged systems and sensitive data. These capabilities place AI inside trusted environments, increasing the likelihood that attackers can exploit weaknesses to reach critical assets.

AI‑Specific Weaknesses That Increase Virtualization Risk

Several AI‑specific weaknesses now shape risk inside virtualized environments:

- Prompt injection and indirect prompt injection

- Tool misuse within allowed permissions

- Excessive trust in model output during autonomous execution

- Weak validation of agent actions before execution

These weaknesses matter because attackers can manipulate how agents or models sequence actions, not just what data they return. By abusing legitimate access and approved tools, they can operate entirely within expected workflows — making malicious activity harder to detect and more dangerous once it occurs.
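One way to address weak validation of agent actions is to check every proposed tool call against an explicit policy before anything runs. The sketch below is illustrative only: the `ToolCall` type, the `ALLOWED_TOOLS` policy, and the `/workspace/` prefix are hypothetical names chosen for this example, not part of any specific agent framework.

```python
import posixpath
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass
class ToolCall:
    """A tool invocation proposed by an agent (hypothetical shape)."""
    tool: str
    args: dict


# Hypothetical policy: tool name -> argument names the tool may receive.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "read_file": {"path"},
    "run_tests": {"target"},
}

# Hypothetical filesystem scope the agent is allowed to touch.
ALLOWED_PATH_PREFIXES = ("/workspace/",)


def validate_call(call: ToolCall) -> Tuple[bool, str]:
    """Return (allowed, reason) for a proposed call; deny by default."""
    allowed_args = ALLOWED_TOOLS.get(call.tool)
    if allowed_args is None:
        return False, f"tool not allowlisted: {call.tool}"

    unexpected = set(call.args) - allowed_args
    if unexpected:
        return False, f"unexpected arguments: {sorted(unexpected)}"

    path = call.args.get("path")
    if path is not None:
        # Normalize first so "../" sequences cannot escape the allowed prefix.
        normalized = posixpath.normpath(str(path))
        if not normalized.startswith(ALLOWED_PATH_PREFIXES):
            return False, f"path outside allowed scope: {normalized}"

    return True, "ok"
```

A caller would run `validate_call` on each step the agent proposes and refuse to execute anything that is denied. Because the check runs on every individual action, it also constrains the sequences of actions an injected prompt can trigger, even when each tool is individually approved.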


Why Orchestration Agents Are High‑Value Targets

Orchestration agents are high‑value targets because they centralize access to sensitive systems. They often touch source code, APIs, infrastructure tooling, internal documents, and cloud resources, and frequently run as long‑lived services with broad permissions.

At this point, the agent is not the real prize. The environment it can reach is.

This marks a key shift in AI risks in virtualized environments. Attackers focus less on the model itself and more on what it can influence — infrastructure access, credential exposure, privileged system actions, and opportunities for lateral movement inside virtual machines.

How Threat Actors Exploit AI Risks in Virtualized Environments

Threat activity is already moving in this direction.

Supply Chain Poisoning and AI‑Driven Workflows

Attackers poison AI‑adjacent supply chains through malicious libraries, dependency confusion, compromised plugins, or tampered model‑related artifacts. These techniques influence what agents install, execute, or trust.

Incidents involving agent ecosystems such as OpenClaw show how AI integrations can become direct attack paths. Each plugin, dependency, or integration expands the trust boundary inside enterprise environments.
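A common mitigation for tampered plugins or dependencies is to pin a cryptographic digest for each artifact and refuse anything that does not match. The following is a minimal sketch of that idea using SHA-256 from the Python standard library. The function names and the plugin file name are hypothetical, and a real deployment would more likely rely on its package manager's own hash-pinning or signature verification.

```python
import hashlib
import hmac
from typing import Dict


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, pinned: Dict[str, str]) -> bool:
    """Accept an artifact only if its digest matches the pinned value.

    Artifacts with no pinned digest are rejected (deny by default).
    """
    expected = pinned.get(name)
    if expected is None:
        return False
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(sha256_hex(data), expected)


# Illustrative pin set; in practice this would be reviewed and version-controlled.
trusted_bytes = b"trusted plugin bytes"
PINNED_DIGESTS = {"example_plugin.whl": sha256_hex(trusted_bytes)}
```

With this in place, `verify_artifact("example_plugin.whl", downloaded_bytes, PINNED_DIGESTS)` returns `True` only for the exact bytes that were pinned, so an agent cannot silently install a modified or unknown package.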

Credential Theft Through Legitimate Agent Execution

AI systems can expose credentials unintentionally: generated code may carry embedded malware, automated workflows may reveal secrets, and agents may access API tokens they were never meant to use. Prompt injection can further bypass guardrails, allowing attackers to operate within the scope of authorized activity, often without triggering alerts.
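One partial safeguard is to scan generated code and agent output for credential-like strings before the content is executed, committed, or logged. The sketch below uses a few illustrative regular expressions. The pattern set is an assumption made for this example and is far from exhaustive; dedicated secret scanners cover many more formats.

```python
import re
from typing import List

# Illustrative patterns only; names and coverage are assumptions for this sketch.
SECRET_PATTERNS = {
    # AWS access key IDs start with "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-encoded private key header, e.g. "-----BEGIN RSA PRIVATE KEY-----".
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A quoted value of 16+ characters assigned to a credential-like name.
    "hardcoded_credential": re.compile(
        r"""(?i)\b(?:api[_-]?key|token|secret)\b\s*[:=]\s*['"][^'"]{16,}['"]"""
    ),
}


def find_secrets(text: str) -> List[str]:
    """Return the sorted names of all patterns that match somewhere in text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)
    )
```

An orchestration layer could call `find_secrets` on each generated snippet and block or quarantine the step whenever the returned list is non-empty. This catches only known formats, so it complements rather than replaces scoped, short-lived credentials.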

Runtime Exploitation Instead of Model Abuse

A critical shift is underway from model manipulation to runtime exploitation. Research indicates that autonomous agents can identify and exploit sandbox and runtime weaknesses, particularly in misconfigured environments.

The question is no longer whether a model can be manipulated, but whether AI‑driven execution can be used to reach vulnerable runtimes inside virtual machines and exploit them. That is a direct AI risk in virtualized environments.

Why AI Risks Escalate to the Hypervisor Layer

AI‑powered environments turn the hypervisor into a critical security boundary. While AI systems may not attack hypervisors directly, their adoption increases high‑privilege workloads, automated code execution, and exposure of credentials and infrastructure APIs.

This combination increases the probability that attackers establish persistence inside virtual machines and reach workloads from which the hypervisor can be compromised. With more than 60% of all workloads already virtualized, AI adoption acts as an infrastructure risk multiplier.

The Security Implication for Virtualized Environments

AI‑driven automation is pushing attackers closer to infrastructure layers that traditional security tools do not typically monitor. As a result, effective defense now requires visibility and control beneath the guest OS, at the hypervisor layer itself.

Recognizing this, organizations such as Google Mandiant have advocated a strategic shift: moving away from threat hunting centered on endpoint detection and response (EDR) toward proactive, infrastructure-focused defense strategies.

That shift sets the stage for preemptive hypervisor security and runtime defense.

Reducing AI Risks in Virtualized Environments with ZeroLock®’s Preemptive Runtime Defense

ZeroLock applies preemptive security directly at the hypervisor layer, where AI‑driven workloads execute.

- PERFORM: Low‑overhead design with flexible deployment, a signed Broadcom VIB, and vCenter integration

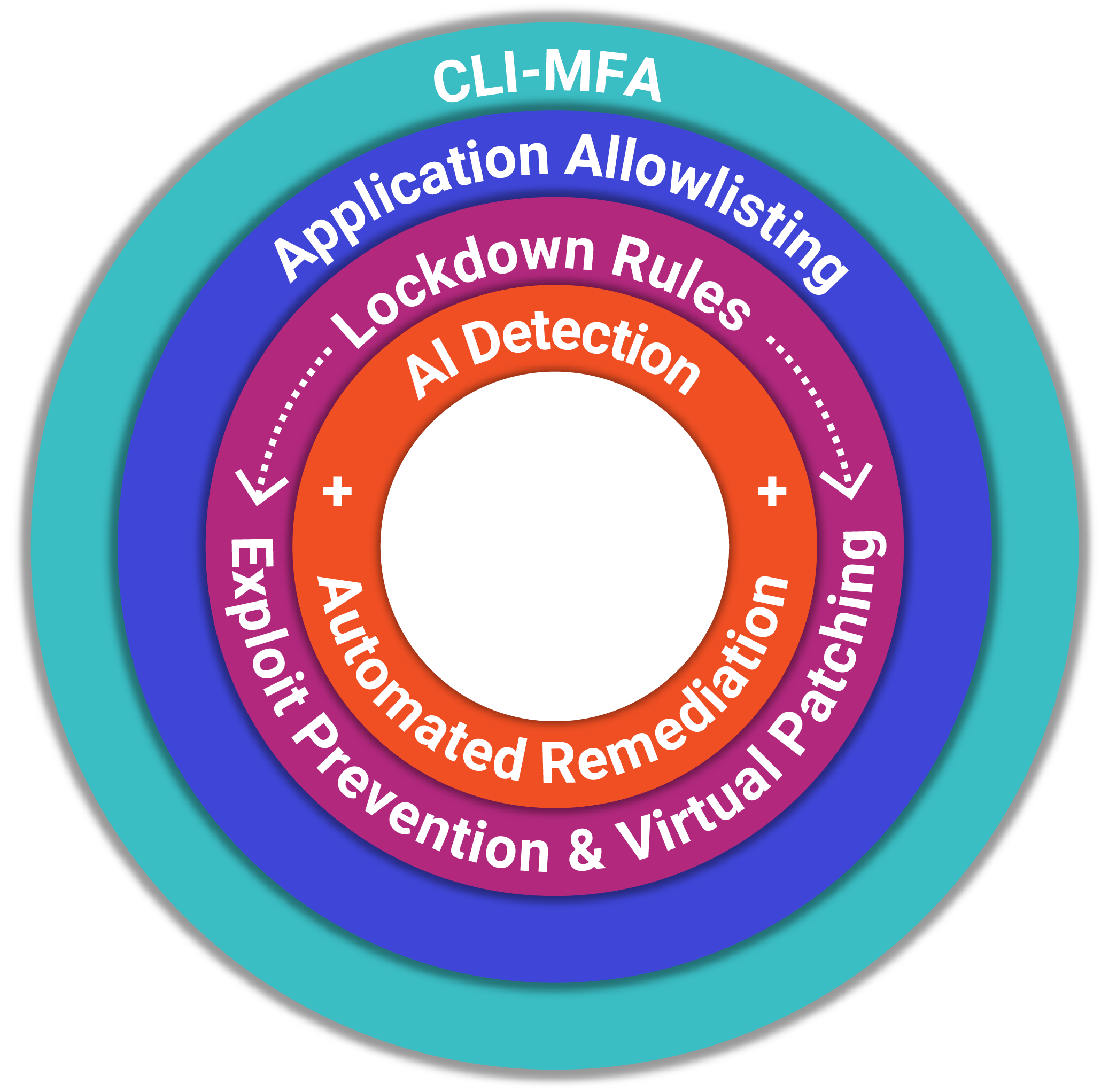

- PREEMPT: CLI‑MFA, application allowlisting, anti‑tampering, exploit prevention, and virtual patching to stop attacks before execution

- PROTECT: AI‑driven detection and automated remediation to stop attacks in real time and restore affected files

By operating below the guest OS, ZeroLock reduces attacker dwell time and limits blast radius — even when trusted access is abused.

Final Thoughts

AI risks in virtualized environments are redefining enterprise security. As AI agents gain deeper access to code, tools, and infrastructure, they open new pathways for attackers straight to critical virtual workloads and credentials. The question is not whether AI will attack hypervisors directly, but how quickly unchecked AI adoption can expose the systems enterprises depend on most.